Observability-Driven Development: Unknown Unknowns

In the modern software landscape, the difference between a system that fails gracefully and one that cascades into catastrophic outages often comes down to one critical capability: observability. Yet most engineering teams confuse observability with monitoring, treating them as synonymous when they represent fundamentally different approaches to understanding system behavior.

Monitoring answers the question: "Is the thing I expected to break actually broken?" Observability answers: "Why is something unexpected happening?" The distinction matters because it shapes your entire instrumentation strategy (see "Observability vs. Monitoring in Distributed Systems", GeeksforGeeks).

Monitoring vs. Observability: The Unknown Unknowns Problem

Monitoring is hypothesis-driven. You define metrics, thresholds, and alerts based on anticipated failure modes. When CPU exceeds 80%, alert. When response time breaches 500ms, escalate. When error rate spikes above 2%, page on-call. These are known unknowns: problems you've already identified and instrumented.

Observability, by contrast, is hypothesis-free. It provides enough system instrumentation that engineers can ask arbitrary questions about system state without predefined dashboards or alerts. If a mysterious request takes 14 seconds to complete, can you trace its journey through 12 microservices and identify the bottleneck at service 7's database query? That's observability.

The business impact is measurable. Organizations with mature observability practices report 46% faster mean time to resolution (MTTR) and 23% fewer customer-impacting incidents annually (see DORA, "Capabilities: Monitoring and observability"). These improvements directly correlate with engineering velocity: less time firefighting means more time shipping features.

The fundamental shift requires moving from reactive threshold-based alerting to high-cardinality, high-dimensionality data collection. Instead of "request count," you need "request count by endpoint, by client, by region, by user tier, by response code." This explosion of dimensionality is what enables hypothesis-free investigation.
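To make the dimensionality point concrete, here is a minimal sketch of a counter keyed by a full label set. The `requestKey` and `requestCounter` types are hypothetical names invented for illustration, not part of any metrics library, and a real backend would bound cardinality rather than keep every combination in memory.

```go
package main

// requestKey is a hypothetical label set; each field is one of the
// dimensions named in the paragraph above.
type requestKey struct {
	Endpoint string
	Client   string
	Region   string
	UserTier string
	Status   int
}

// requestCounter counts requests per distinct combination of labels.
type requestCounter map[requestKey]int

// observe records one request under its full label set.
func (c requestCounter) observe(k requestKey) { c[k]++ }

// countWhere sums every series whose labels satisfy match. Because the
// dimensions were recorded up front, the question can be chosen later.
func (c requestCounter) countWhere(match func(requestKey) bool) int {
	total := 0
	for k, n := range c {
		if match(k) {
			total += n
		}
	}
	return total
}
```

With the dimensions stored, a question such as "how many 5xx responses came from one region?" becomes a call to `countWhere` with a predicate on `Region` and `Status`, with no dashboard defined in advance. The trade-off is that every distinct label combination is its own series, which is where the storage cost of high cardinality comes from.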

The Three Pillars: Logs, Metrics, and Traces

Effective observability rests on three equally weighted pillars: logs, metrics, and traces. The problem is stark: most teams excel at one, adequately handle another, and nearly ignore the third.

Metrics measure aggregate system behavior. They're time-series data—numerical values at specific timestamps—designed for efficient storage and real-time dashboarding. A metric might be: "95th percentile latency = 342ms at 2024-01-15T14:32:00Z."
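As an illustration of how a percentile metric like the one above could be derived from raw samples, the sketch below uses the nearest-rank method. That choice is an assumption: metrics backends often use histograms or other estimators instead, and the function name `percentile` is invented for this example.

```go
package main

import (
	"math"
	"sort"
)

// percentile returns the p-th percentile (0 < p <= 100) of samples using
// the nearest-rank method. It returns 0 for an empty input.
func percentile(samples []float64, p float64) float64 {
	if len(samples) == 0 {
		return 0
	}
	// Sort a copy so the caller's slice is left untouched.
	sorted := append([]float64(nil), samples...)
	sort.Float64s(sorted)

	// Nearest rank: the smallest rank covering at least p% of the samples.
	rank := int(math.Ceil(p / 100 * float64(len(sorted))))
	if rank < 1 {
		rank = 1
	}
	if rank > len(sorted) {
		rank = len(sorted)
	}
	return sorted[rank-1]
}
```

With only ten latency samples, the nearest-rank p95 is simply the largest sample, which is one reason percentile metrics are only meaningful over reasonably large windows.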

Logs capture discrete events with context. They're typically higher-cardinality than metrics but harder to aggregate. A log entry includes the event (request completed), structured context (user_id, endpoint, duration), and unstructured detail (error message, stack trace).

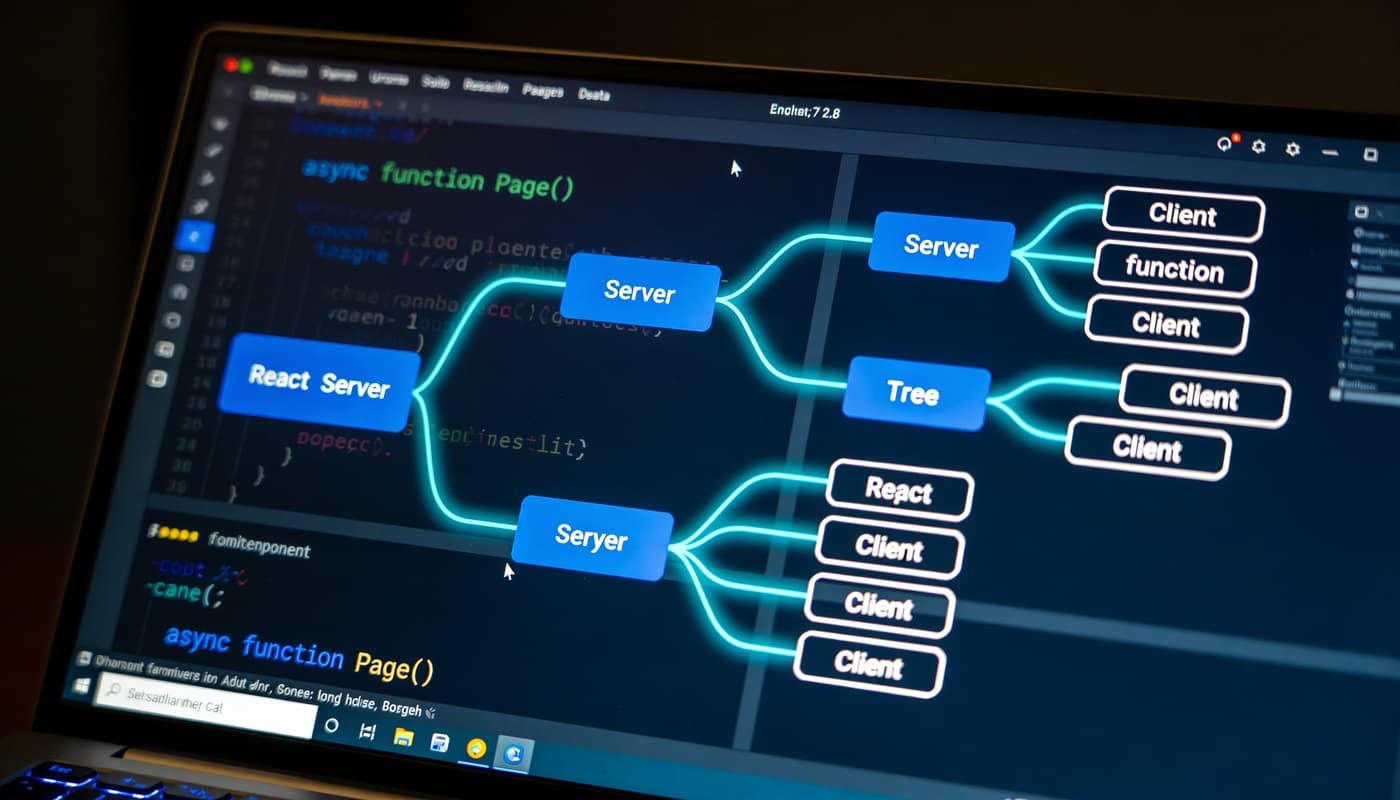

Traces connect the dots across distributed systems. A single user request might spawn 50 internal calls across 12 services. A trace ties all these together, showing the call graph, latencies at each hop, and error propagation (see Microservices Pattern, "Pattern: Distributed tracing").
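The sketch below shows one way per-hop latencies in a trace can point to a bottleneck: subtract each span's direct children from its own duration and pick the span with the most time left over. The `span` type is a simplified, hypothetical record, and the calculation assumes child spans run sequentially without overlapping; tracing backends handle concurrency and clock skew with more care.

```go
package main

// span is a simplified, hypothetical span record; real tracing backends
// store more fields (timestamps, attributes, status).
type span struct {
	ID         string
	ParentID   string // empty for the root span
	Service    string
	DurationMS int
}

// selfTimes returns, for each span ID, its duration minus the time spent
// in its direct children. It assumes children do not overlap in time.
func selfTimes(spans []span) map[string]int {
	self := make(map[string]int, len(spans))
	for _, s := range spans {
		self[s.ID] += s.DurationMS
		if s.ParentID != "" {
			self[s.ParentID] -= s.DurationMS
		}
	}
	return self
}

// bottleneck returns the span with the largest self time. The boolean is
// false when the trace is empty. Ties go to the earlier span in the slice.
func bottleneck(spans []span) (span, bool) {
	self := selfTimes(spans)
	var best span
	found := false
	for _, s := range spans {
		if !found || self[s.ID] > self[best.ID] {
			best, found = s, true
		}
	}
	return best, found
}
```

For a 14-second request whose time is almost entirely spent in one nested database call, `bottleneck` returns that call's span rather than the root, because the root's own self time is small once its children are subtracted.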

The gap exists because tracing infrastructure requires the most organizational lift. Logging is mature (ELK, Loki, Splunk). Metrics are commoditized (Prometheus, Datadog, New Relic). But distributed tracing demands code instrumentation, standardized propagation headers, and a tracing backend—and most teams tackle it last, if at all.

Structured Logging for 10x Faster Debugging

Unstructured logging—logger.info("User logged in with id " + userId)—is debugging's enemy. Parsing textual logs consumes time and introduces errors. Structured logging flips the paradigm.

{
  "timestamp": "2024-01-15T14:32:15.247Z",
  "level": "info",
  "service": "auth-service",
  "message": "user_login_success",
  "user_id": "usr_7f4e82c",
  "session_id": "sess_a1b2c3d",
  "ip_address": "192.0.2.145",
  "duration_ms": 142,
  "mfa_enabled": true,
  "tags": ["audit", "security"]
}

Compare that to its unstructured equivalent: 2024-01-15 14:32:15 User usr_7f4e82c logged in from 192.0.2.145 in 142ms with MFA enabled. The structured version is immediately queryable:

-- Unstructured nightmare
SELECT * FROM logs
WHERE message LIKE '%User%logged in%'
  AND message LIKE '%192.0.2.145%'
  AND timestamp > '2024-01-15 14:30:00';

-- Structured clarity
SELECT * FROM logs
WHERE service = 'auth-service'
  AND message = 'user_login_success'
  AND ip_address = '192.0.2.145'
  AND timestamp > '2024-01-15 14:30:00';

Implement structured logging with semantic fields:

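import (
	"encoding/json"
	"log"
	"os"
	"time"
)

// LogEvent is one structured log entry. TraceID and SpanID link it to a trace.
type LogEvent struct {
	Timestamp time.Time              `json:"timestamp"`
	Level     string                 `json:"level"`
	Service   string                 `json:"service"`
	Message   string                 `json:"message"`
	TraceID   string                 `json:"trace_id"`
	SpanID    string                 `json:"span_id"`
	Fields    map[string]interface{} `json:"fields"`
	Tags      []string               `json:"tags"`
}

// logUserAction emits a single JSON-encoded event for a user action.
func logUserAction(traceID, spanID, userID, action string, duration time.Duration) {
	event := LogEvent{
		Timestamp: time.Now().UTC(),
		Level:     "info",
		Service:   "user-service",
		Message:   action,
		TraceID:   traceID,
		SpanID:    spanID,
		Fields: map[string]interface{}{
			"user_id":     userID,
			"duration_ms": duration.Milliseconds(),
		},
		Tags: []string{"audit"},
	}

	// Write one JSON object per line to stdout; a log shipper is assumed
	// to forward stdout to the log aggregator.
	if err := json.NewEncoder(os.Stdout).Encode(event); err != nil {
		log.Printf("failed to encode log event: %v", err)
	}
}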

Key practice: always include trace_id and span_id in every log entry. This creates the bridge between logs and traces—when you see a transaction fail, you can instantly pivot from the log to the distributed trace.
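One way to make that practice hard to forget is to bind the IDs to the logger once per request, so every later entry carries them automatically. The sketch below assumes Go 1.21 or later and uses the standard library's `log/slog`; the helper names `newTraceLogger` and `logAndDecode` are invented for this example. Note that slog's JSON handler writes the message under `msg` and the timestamp under `time`, which differs from the field names in the example event above.

```go
package main

import (
	"bytes"
	"encoding/json"
	"io"
	"log/slog"
)

// newTraceLogger returns a JSON logger whose every entry carries the given
// service name, trace ID, and span ID.
func newTraceLogger(w io.Writer, service, traceID, spanID string) *slog.Logger {
	return slog.New(slog.NewJSONHandler(w, nil)).With(
		"service", service,
		"trace_id", traceID,
		"span_id", spanID,
	)
}

// logAndDecode is a demonstration helper: it writes one entry to an
// in-memory buffer and decodes it back so the fields can be inspected.
func logAndDecode(traceID, spanID string) (map[string]any, error) {
	var buf bytes.Buffer
	logger := newTraceLogger(&buf, "user-service", traceID, spanID)

	// The call site only supplies event-specific fields; the trace fields
	// were attached when the logger was created.
	logger.Info("user_login_success", "user_id", "usr_7f4e82c", "duration_ms", 142)

	var entry map[string]any
	err := json.Unmarshal(buf.Bytes(), &entry)
	return entry, err
}
```

In a real service the writer would be stdout or a log shipper rather than a buffer, and the request-scoped logger would typically travel with the request context.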

Distributed Tracing Without Vendor Lock-In

Distributed tracing infrastructure is now standardized via OpenTelemetry, an open-source initiative backed by the CNCF (see "OpenTelemetry | CNCF"). Using OpenTelemetry prevents vendor lock-in while providing a unified instrumentation layer.

The pattern: the trace context (the trace ID plus the caller's span ID) propagates via HTTP headers, gRPC metadata, or message-queue headers. Each service creates child spans within that trace. The OpenTelemetry SDK handles span creation and export.
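For HTTP, the header most commonly used for this is the W3C Trace Context `traceparent` header, which OpenTelemetry's propagators read and write for you. The sketch below hand-rolls the version `00` format purely to show what travels on the wire; the type and function names are invented for illustration, and production code should rely on the SDK's propagator instead.

```go
package main

import (
	"encoding/hex"
	"fmt"
	"strings"
)

// traceContext holds the fields carried by a version-00 traceparent header.
type traceContext struct {
	TraceID string // 32 lowercase hex characters
	SpanID  string // 16 lowercase hex characters (the caller's span)
	Sampled bool
}

// formatTraceparent renders "00-<trace-id>-<span-id>-<flags>".
func formatTraceparent(tc traceContext) string {
	flags := "00"
	if tc.Sampled {
		flags = "01"
	}
	return fmt.Sprintf("00-%s-%s-%s", tc.TraceID, tc.SpanID, flags)
}

// validID reports whether s is n lowercase hex characters and not all zeros.
func validID(s string, n int) bool {
	if len(s) != n || s != strings.ToLower(s) {
		return false
	}
	if _, err := hex.DecodeString(s); err != nil {
		return false
	}
	return strings.Trim(s, "0") != ""
}

// parseTraceparent accepts only version 00 and rejects malformed IDs.
func parseTraceparent(header string) (traceContext, error) {
	parts := strings.Split(header, "-")
	if len(parts) != 4 || parts[0] != "00" {
		return traceContext{}, fmt.Errorf("unsupported traceparent %q", header)
	}
	if !validID(parts[1], 32) || !validID(parts[2], 16) {
		return traceContext{}, fmt.Errorf("invalid IDs in traceparent %q", header)
	}
	flags, err := hex.DecodeString(parts[3])
	if err != nil || len(flags) != 1 {
		return traceContext{}, fmt.Errorf("invalid flags in traceparent %q", header)
	}
	return traceContext{
		TraceID: parts[1],
		SpanID:  parts[2],
		Sampled: flags[0]&0x01 == 0x01, // lowest bit is the sampled flag
	}, nil
}
```

A receiving service keeps the trace ID, treats the incoming span ID as the parent of the span it starts, and writes its own span ID into the header on any outgoing call.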

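import (
	"context"

	"go.opentelemetry.io/otel"
	"go.opentelemetry.io/otel/attribute"
	"go.opentelemetry.io/otel/codes"
)

// userServiceClient and persistOrder are assumed to be defined elsewhere
// in the service. Exporter setup belongs in startup code, not here.
func createUserOrder(ctx context.Context, userID string, orderData map[string]interface{}) error {
	tracer := otel.Tracer("order-service")

	// Keep the returned context: it carries the new span, so anything
	// started from ctx below becomes a child of create_user_order.
	ctx, span := tracer.Start(ctx, "create_user_order")
	defer span.End()

	span.SetAttributes(
		attribute.String("user.id", userID),
		attribute.Int("order.items", len(orderData)),
	)

	// With an instrumented client, this RPC carries the trace headers from ctx.
	user, err := userServiceClient.GetUser(ctx, userID)
	if err != nil {
		span.RecordError(err)
		span.SetStatus(codes.Error, err.Error())
		return err
	}

	// persistOrder receives the same ctx, so spans it starts join this trace.
	if _, err := persistOrder(ctx, user, orderData); err != nil {
		span.RecordError(err)
		span.SetStatus(codes.Error, err.Error())
		return err
	}
	return nil
}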

Crucially, the same instrumentation code works with Jaeger, Honeycomb, Datadog, or any OpenTelemetry-compatible backend. You're not locked in. This is organizational leverage.

SLOs and Error Budgets: Engineering Management Tools

Service Level Objectives (SLOs) translate business requirements into engineering constraints. An SLO states: "This service will be available 99.9% of the time, measured over a rolling 30-day period."

This connects observability directly to product decisions through the error budget concept:

Error Budget = Allowed Downtime = (1 - SLO Target) × Time Period

For a 99.9% SLO over 30 days: (1 - 0.999) × 2,592,000 seconds = 2,592 seconds (43.2 minutes)

If you've had 28 minutes of unplanned downtime this month, you have 15.2 minutes of error budget remaining. This is your permission to ship riskier features, deploy without waiting for optimal traffic windows, or run aggressive experiments (see Google SRE, "Error Budget Policy for Service Reliability").
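The arithmetic above can be sketched in a few lines. The function names `errorBudget` and `remainingBudget` are invented for this example, and the sketch treats the budget purely as downtime over a fixed window; request-based budgets would count failed requests instead.

```go
package main

import (
	"math"
	"time"
)

// errorBudget returns the allowed downtime for an SLO target (for example
// 0.999) over a window of the given number of days.
func errorBudget(sloTarget float64, windowDays int) time.Duration {
	window := time.Duration(windowDays) * 24 * time.Hour
	// Round to the nearest nanosecond to absorb floating-point error.
	return time.Duration(math.Round((1 - sloTarget) * float64(window)))
}

// remainingBudget subtracts downtime already incurred in the window.
// A negative result means the budget has been overspent.
func remainingBudget(sloTarget float64, windowDays int, downtime time.Duration) time.Duration {
	return errorBudget(sloTarget, windowDays) - downtime
}
```

Calling `errorBudget(0.999, 30)` yields 43m12s, and `remainingBudget(0.999, 30, 28*time.Minute)` yields 15m12s, matching the 43.2 and 15.2 minute figures worked out by hand.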

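Teams using error budgets make better decisions, because the remaining budget gives a shared, quantitative basis for trading release speed against reliability.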

SLI (Service Level Indicator) metrics directly feed this framework. For an API service:

- Availability SLI: (successful requests) / (total requests)

- Latency SLI: percentage of requests completing within 400ms

- Error Rate SLI: percentage of requests returning 5xx status codes

These aren't arbitrary dashboard metrics; they're explicit commitments about system behavior.
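As a rough sketch of how such indicators could be computed from raw request records, the code below derives all three in one pass. The `request` and `slis` types are hypothetical, and two definitions are assumptions rather than anything stated above: a request counts as successful unless it returns a 5xx status, and the error rate is the share of 5xx responses.

```go
package main

// request is a hypothetical record of one served API call.
type request struct {
	Status    int // HTTP status code
	LatencyMS int
}

// slis holds the three indicators as fractions between 0 and 1.
type slis struct {
	Availability float64 // non-5xx responses / total
	Latency      float64 // responses within the threshold / total
	ErrorRate    float64 // 5xx responses / total
}

// computeSLIs derives the indicators from a batch of requests. It returns
// zero values for an empty batch to avoid dividing by zero.
func computeSLIs(reqs []request, latencyThresholdMS int) slis {
	if len(reqs) == 0 {
		return slis{}
	}
	var succeeded, fast, failed int
	for _, r := range reqs {
		if r.Status >= 500 {
			failed++
		} else {
			succeeded++
		}
		if r.LatencyMS <= latencyThresholdMS {
			fast++
		}
	}
	n := float64(len(reqs))
	return slis{
		Availability: float64(succeeded) / n,
		Latency:      float64(fast) / n,
		ErrorRate:    float64(failed) / n,
	}
}
```

Whether 4xx responses count against availability is a policy decision each team has to make; this sketch counts them as successful because they usually reflect client mistakes rather than service failures.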

Building an Observability Culture

Observability maturity reflects organizational culture, not just tooling. The most expensive observability stacks fail when teams treat observability as ops-only responsibility.

The shift requires:

1. Production ownership across engineering. Every developer ships code that lands in production. Production failures should feel immediate and personal. Implement an "on-call rotation for features you shipped": if you own the code, you own incident response for that service for one week each quarter. This creates powerful incentive alignment.

2. Bake observability into code review. Don't ask: "Is there sufficient logging?" Ask: "Can I debug a production issue with this code's instrumentation without deploying new code?" Before merging, verify:

- Trace ID present in all log entries

- Key business identifiers (user_id, request_id) included as trace attributes

- Error scenarios logged with sufficient context

- No debug logging in hot paths

3. Invest in observability literacy. Most engineers learn observability tools through crisis. Instead, run quarterly "observability workshops" where teams debug synthetic issues using traces, logs, and metrics together. This builds pattern recognition for production debugging.

4. Make observability visible in sprint planning. Reserve 15-20% of sprint capacity for observability work: adding traces to new services, reducing log cardinality, improving alert signal-to-noise ratios. Treat it as mandatory, not optional.

Research indicates that teams with strong observability culture experience 40% fewer customer-impacting incidents, even controlling for infrastructure complexity (see "7 Incident Response Metrics and How to Use Them", SecurityScorecard).

Conclusion

Observability is not a tool problem—it's an architectural and cultural one. The transition from monitoring (known unknowns) to observability (unknown unknowns) requires unified instrumentation across logs, metrics, and traces; structured logging practices that enable forensic debugging; open standards like OpenTelemetry to avoid lock-in; and SLO-driven error budgets that align engineering velocity with reliability.

If your team measures observability maturity by "we have a Grafana dashboard," you're still in the monitoring era. True observability means any engineer can answer arbitrary questions about production behavior without predefined dashboards, and they can do it in under five minutes. That's the bar to build toward.

Ready to mature your observability practice? ClearPath Consultants specializes in helping enterprises architect observability strategies, implement structured instrumentation across complex microservice environments, and build the organizational practices that sustain high-cardinality observability at scale. Let's discuss how observability can transform your engineering culture and incident response capability.

Chief Technology Officer

Raymond brings over 15 years of experience leading enterprise technology transformations. Before joining ClearPath, he architected cloud migration strategies for Fortune 500 companies and led engineering teams at two successful SaaS startups. He specializes in helping mid-market businesses modernize their technology infrastructure without disrupting operations.